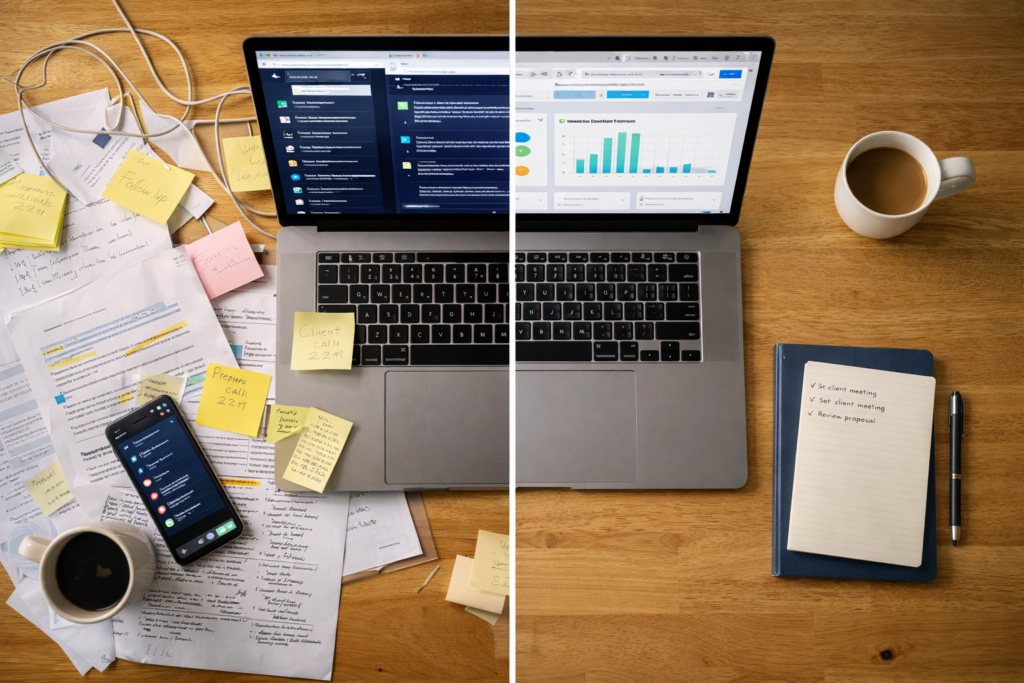

AI activity without business improvement is one of the most common outcomes in the first 60 days of an AI rollout, and one of the hardest to diagnose because the motion feels real.

Three weeks after rolling out an AI writing assistant, a marketing director showed me the team’s dashboard. Usage was up 340%. Draft volume had doubled. The team was engaged. Then I asked what had disappeared. Silence. Nothing had stopped. No meetings ended. No approval chains shortened. No bottlenecks cleared. They’d automated the easy part of a broken process and called it progress.

This is the pattern that kills most early AI efforts. Not because the tools failed or people resisted. Because teams mistake activity for improvement and never course-correct. AI creates visible motion immediately. Drafts appear. Summaries generate. Responses move faster. Output goes up, so it feels like something’s working. But output was never the problem. The problem was always the work that shouldn’t exist in the first place.

That marketing team automated email drafts, but the approval process still required three sign-offs and two revision rounds. They summarized meeting notes faster, but the meetings themselves still ran long because no one had clear decision authority. They generated more content ideas, but the editorial calendar was already overloaded and nothing got cut. AI made every step faster. The system stayed just as heavy. People felt productive. The director felt modern. Nobody felt relieved.

Relief is the only metric that matters early. Not adoption rates or engagement scores or tool usage. The only questions worth asking at the 30-day mark are subtraction questions. What meetings ended? What approvals disappeared? What hand-offs collapsed? What decisions moved without escalation? If those answers are “none,” you added cost, not leverage.

Most teams skip the audit because it feels like killing momentum. Leaders want visible progress. Teams want to keep experimenting. So they layer AI onto more workflows without fixing the first one. Six months later, they’re using twelve tools, everyone’s busy, and the CEO asks why nothing feels different.

The discipline nobody wants to enforce is the simplest one: decide what work should stop before you automate what stays. That marketing team never made that decision. They assumed AI would reveal what to cut. It didn’t. AI doesn’t edit your workload. It amplifies whatever you point it at. If you point it at unnecessary work, you get more unnecessary work done faster.

Pick one thing you’re using AI for right now. Ask yourself what part of the workflow around this task exists only because it’s always been done that way. Maybe it’s the review step that adds no value. Maybe it’s the update meeting that just confirms what everyone already knows. Maybe it’s the formatting requirement nobody actually reads. Remove it this week. If you can’t identify a single step to eliminate, you’re automating noise.

I’ve seen this play out dozens of times. A team adopts AI with genuine intent. They train people. They pick solid tools. Thirty days later, everyone’s using it. Sixty days later, nobody feels different. The assumption is always the same: we need better tools, more training, a clearer strategy. The real issue is simpler. They never decided what work should stop. They added capability without subtracting obligation. That doesn’t create leverage. It creates the same exhaustion, just faster.

Every conversation I have with business owners follows the same arc. They tried AI. It worked technically. But what they got was AI activity without business improvement: usage went up, and relief never came. They assume they picked the wrong tool or didn’t train well enough. The real issue is that they never decided what deserved to survive. AI can’t make that call. It’ll optimize anything you ask it to. Your job is to decide what work is worth doing at all, then point AI at that. Everything else has to go first.

Stop expanding. Audit what changed. If nothing structural improved, pause everything until you know why. Don’t add another workflow. Don’t try another tool. Don’t scale what isn’t working. Diagnose the gap between activity and relief. Close it before you move forward.

The TAKTOS Business Check is a structured diagnostic that identifies the gap between what your AI tools are doing and what your business actually needs. Learn more at taktos.ai/businesscheck.

Chuck Rayman is the founder of TAKTOS, an AI advisory and education firm for small businesses. TAKTOS helps owners determine where AI will deliver real value and where it will not. Visit taktos.ai.