TAKTOS | AI Strategy for Small Business | taktos.ai

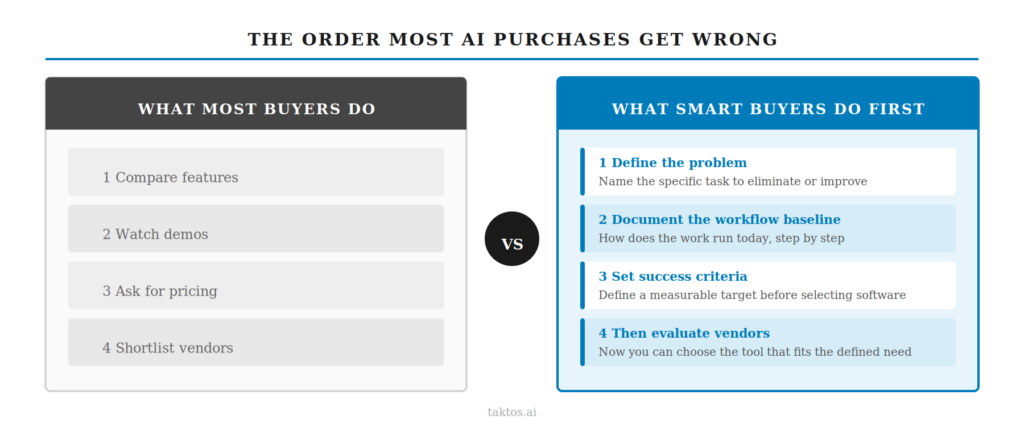

Most AI purchases start in the wrong place.

A business owner hears about a tool from a peer, watches a vendor demo, or reads a list of recommendations. They compare features and pricing. They pick one. Then, six months later, the tool is barely used and the problem that prompted the search is still there.

This is not a vendor problem. It is a sequencing problem. The business skipped the diagnostic work that makes any tool decision sound.

According to BCG research covering 1,000 senior executives across 59 countries, 74% of companies have yet to show tangible value from their AI investments. (BCG, Where’s the Value in AI?, October 2024)

The issue is not a lack of tools. It is a lack of readiness before the tool conversation starts.

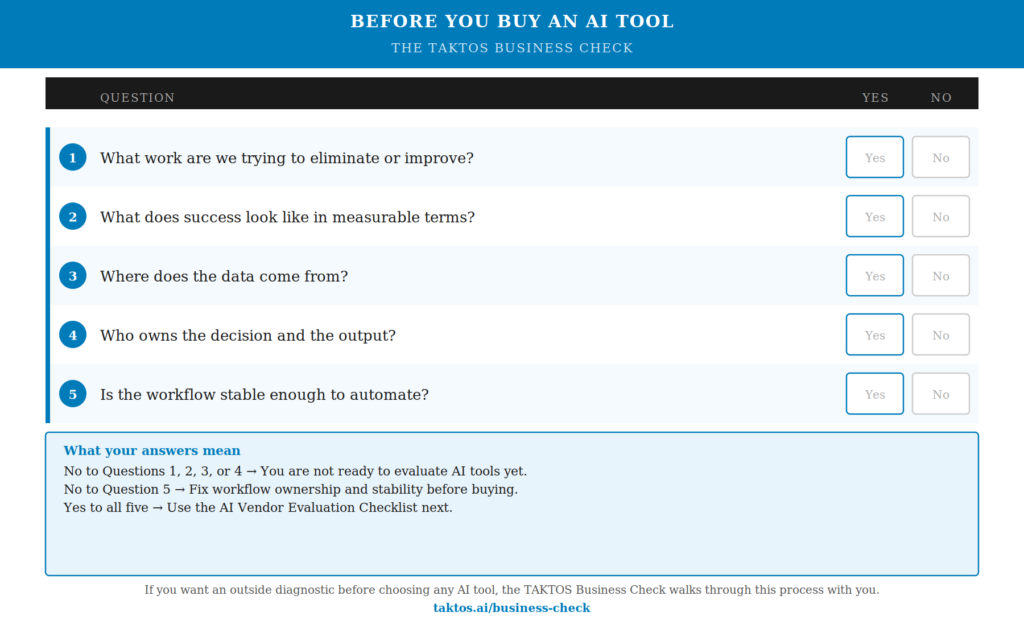

Before any vendor evaluation makes sense, answer these five questions about your own business.

Five Questions to Ask Before Buying Any AI Tool

Question 1: What Work Are We Trying to Eliminate or Improve?

Start with a task, not a goal.

Most business owners say they want to be more efficient, reduce manual work, or save time. Those are goals. They are not starting points. You cannot point AI at a goal.

Describe the work in plain language. Who performs it. How long it takes each time it runs. Where errors happen. What the output is supposed to look like.

If you can state it in one sentence, you have something to work with. “Our office manager spends five hours per week pulling project cost data from three separate systems and formatting it into a weekly summary report.” That is a specific task. That is where the analysis starts.

If your answer requires three sentences to get to the actual work, keep narrowing. The more specific the task, the faster you can assess whether any tool is worth testing.

AI tools are designed to operate on defined inputs and defined outputs. Vague input produces vague output. This question sets the foundation for every decision that follows.

Question 2: What Does Success Look Like in Measurable Terms?

Define the outcome before selecting software.

This is where most buyers skip an important step. They identify the problem, look at tools, and assume the tool will deliver improvement. But improvement against what? If there is no baseline, there is no way to know whether anything changed.

Set a specific target. Some examples:

Time: Reduce proposal drafting time from 90 minutes to 20.

Response: Cut average customer response time from two days to four hours.

Accuracy: Reduce data entry errors in weekly reports from twelve per batch to zero.

The target does not have to be perfect on day one. It just has to be specific enough that you will know in 30 days whether the tool is working.

Without a measurable target, AI adoption drifts. The tool gets configured, people use it inconsistently, and the business has no way to evaluate whether the investment was worthwhile. A number forces accountability on both sides: the tool and the team using it.
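One way to keep these targets honest is to write the baseline and the goal down side by side and work out what improvement you are actually asking for. The sketch below is a minimal illustration, not a prescribed method: the `Target` class and its field names are hypothetical, the figures are the three examples above, and "two days" is assumed to mean 48 clock hours.

```python
from dataclasses import dataclass


@dataclass
class Target:
    """A baseline-versus-goal pair for one measurable outcome (hypothetical structure)."""
    name: str
    baseline: float  # value before the tool is introduced
    goal: float      # value you want to reach
    unit: str

    def planned_reduction_pct(self) -> float:
        """Percent reduction the goal represents relative to the baseline."""
        return round((self.baseline - self.goal) / self.baseline * 100, 1)

    def is_met(self, measured: float) -> bool:
        """True when the measured value is at or below the goal (lower is better)."""
        return measured <= self.goal


# The three example targets from the text; two days is assumed to be 48 hours.
targets = [
    Target("Proposal drafting time", 90, 20, "minutes"),
    Target("Customer response time", 48, 4, "hours"),
    Target("Data entry errors per batch", 12, 0, "errors"),
]

for t in targets:
    print(f"{t.name}: {t.baseline:g} -> {t.goal:g} {t.unit} "
          f"({t.planned_reduction_pct()}% reduction)")
```

At the 30-day mark, calling `is_met` with the measured value (for example, `targets[0].is_met(35)`) gives a plain yes or no instead of an impression.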

Question 3: Where Does the Data Come From?

AI systems require input. That input has to come from somewhere.

Most small businesses have fragmented records. Customer notes in email threads. Pricing in spreadsheets. Contracts in shared drives. Field reports in text messages. Job histories in someone’s memory.

Before evaluating any tool, map where the information lives for the task you identified in Question 1. Is it in one system or several? Is it consistently structured, or does every entry look different? Can you retrieve it without asking a specific person?

You do not need a sophisticated database. You need the information to exist somewhere accessible and consistent. If the answer to “where does this data live” takes more than two steps to explain, you have a data organization problem that precedes any tool decision.

This is not a technology problem. It is an organizational one, and it shows up in businesses of every size and industry. Many businesses run on informal systems that rely on institutional memory. Those systems work until they do not. A tool layered on top of disorganized data will produce disorganized output. This question surfaces that gap before you spend money on something that cannot work.

Question 4: Who Owns the Decision and the Output?

AI produces drafts, summaries, and recommendations. Someone must review and approve the result. Identify that person before you buy anything.

Define three things:

Who checks the output. This person reviews whatever the tool produces before it enters a workflow or reaches a customer.

Who accepts responsibility for errors. If the AI-generated summary contains a mistake, who owns that and corrects it?

Where the output enters the workflow. At what point does the AI output become an input to the next step in the process?

This person does not need to be technical. They need to be accountable. In a small business, the owner often assumes this defaults to them. That is fine, provided the owner also has the attention to follow through. If the honest answer is “we will figure that out after we buy it,” adoption will stall.

Shared ownership is one of the most common reasons AI projects drift. Everyone agrees it is a good idea. Nobody is responsible for making sure it actually runs. Two months later, the tool is configured, nobody is using it, and the original problem is unchanged.

Name the person before the purchase. If you cannot, that is the first problem to solve.

Question 5: Is the Workflow Stable Enough to Automate?

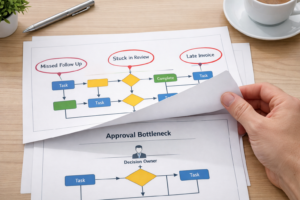

Automation accelerates whatever process already exists. If the process is broken or inconsistent, automating it makes it fail faster and at greater cost.

Ask whether the task you identified in Question 1 runs the same way every time. Same steps. Same sequence. Same output format. If the answer is yes, it is a candidate for automation. If the answer is “it depends on who does it” or “we adjust it based on the situation,” you do not have an automation problem yet. You have a process standards problem.

Document the workflow first. Write down each step. Note where decisions happen, where information is gathered, and where the output goes. If that documentation reveals inconsistency, fix the inconsistency before introducing a tool.

This sequence matters because tools amplify what is already there. A consistent process, automated well, becomes faster and more reliable. An inconsistent process, automated, becomes inconsistently wrong at scale.

Process before automation. Every time.

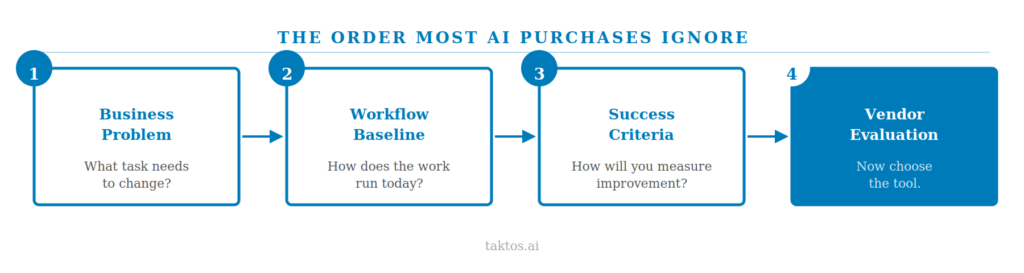

The Order Most AI Purchases Ignore

Most AI buying guides start with vendor comparison. This one starts with your operations. That is not an accident.

The correct sequence is:

1. Business problem. What specific task is costing you time or producing errors?

2. Workflow baseline. How does that task run today, who does it, and what does the output look like?

3. Success criteria. What specific, measurable improvement would confirm that a tool is working?

4. Vendor evaluation. Given the above, which tools are actually designed to solve this problem?

Most buyers invert this order. They start at step four and work backward, trying to make a tool fit a problem they have not fully defined. That is how businesses end up with subscriptions they do not use.

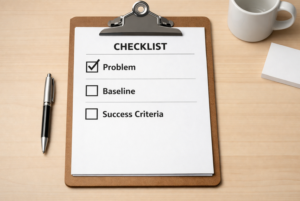

These five questions enforce the correct order. They are not a checklist. They are a diagnostic. Each one either clears you to move forward or surfaces a gap that needs to be addressed before any tool conversation starts.

If you answered all five questions clearly, you are ready for step four.

If one or more answers were unclear, you now know where to start. Fix the gap. The tool decision becomes easier afterward, and the adoption outcome is far more likely to hold.
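The diagnostic can be recorded as simply as a list of questions with an answer, or a blank, next to each. The sketch below is one hypothetical way to do that: the question labels and sample answers are illustrative (two are left blank on purpose), and an empty answer is treated as a gap to fix before vendor evaluation.

```python
# Hypothetical answers to the five questions; replace with your own.
# An empty string means the question has not been answered clearly yet.
answers = {
    "Q1 task": "Office manager spends five hours per week building the weekly cost summary.",
    "Q2 success measure": "",
    "Q3 data source": "Project costs live in three separate systems.",
    "Q4 owner": "",
    "Q5 stable workflow": "Same steps, same sequence, same output format every week.",
}


def find_gaps(answers: dict[str, str]) -> list[str]:
    """Return the questions that still lack a clear answer, in order."""
    return [question for question, answer in answers.items() if not answer.strip()]


gaps = find_gaps(answers)
if gaps:
    print("Fix these gaps before evaluating vendors:", ", ".join(gaps))
else:
    print("All five questions answered. Ready for vendor evaluation.")
```

With the sample answers above, the success measure and the owner come back as the gaps to close first.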

How to Evaluate AI Tools for Business Before Comparing Vendors

Once you have answered the five questions, the evaluation process becomes straightforward. You are no longer comparing tools in the abstract. You are comparing tools against a specific task, a measurable target, a defined data source, a named owner, and a documented process.

That context eliminates most tools from consideration immediately. The remaining candidates can be evaluated on one question: is this tool designed to operate on the kind of input you have and produce the kind of output you need?

That is a much sharper question than “which tool has the best features.”

AI Buying Checklist for Small Business Owners

If you want a structured framework for evaluating specific tools after completing this diagnostic work, download the AI Vendor Evaluation Checklist at taktos.ai/resources. It walks you through the evaluation criteria that apply once your operational questions are answered.

Work Through This with an Outside Perspective

If you want a structured outside diagnostic before committing budget or time to any AI tool, the TAKTOS Business Check is designed for exactly this.

It is a structured engagement that examines your operations, identifies where the real gaps are, and produces a clear recommendation: proceed with AI adoption, fix foundational issues first, or hold. No tool recommendations. No implementation. Just a clear answer to whether your business is ready, and where to start if it is.

Learn more at taktos.ai/businesscheck.

Chuck Rayman is the founder of TAKTOS, an AI advisory and education firm for small businesses. TAKTOS helps owners determine where AI will deliver real value and where it will not. Visit taktos.ai.